Project Case Study

Autograd Engine (From Scratch)

Updated: May 2026

View Repository →Built a reverse-mode autodiff engine from scratch to understand how computation graphs are constructed and executed. Focused on correctness, scalability, and system-level trade-offs in gradient computation.

- • ~1e-10 gradient error

- • Scales to 10,000+ nodes

- • Iterative DFS (no recursion limit)

Problem

Modern ML frameworks abstract away gradient computation, making it difficult to understand how backpropagation and graph execution work internally. This project rebuilds autograd from first principles to analyze system behavior and execution trade-offs.

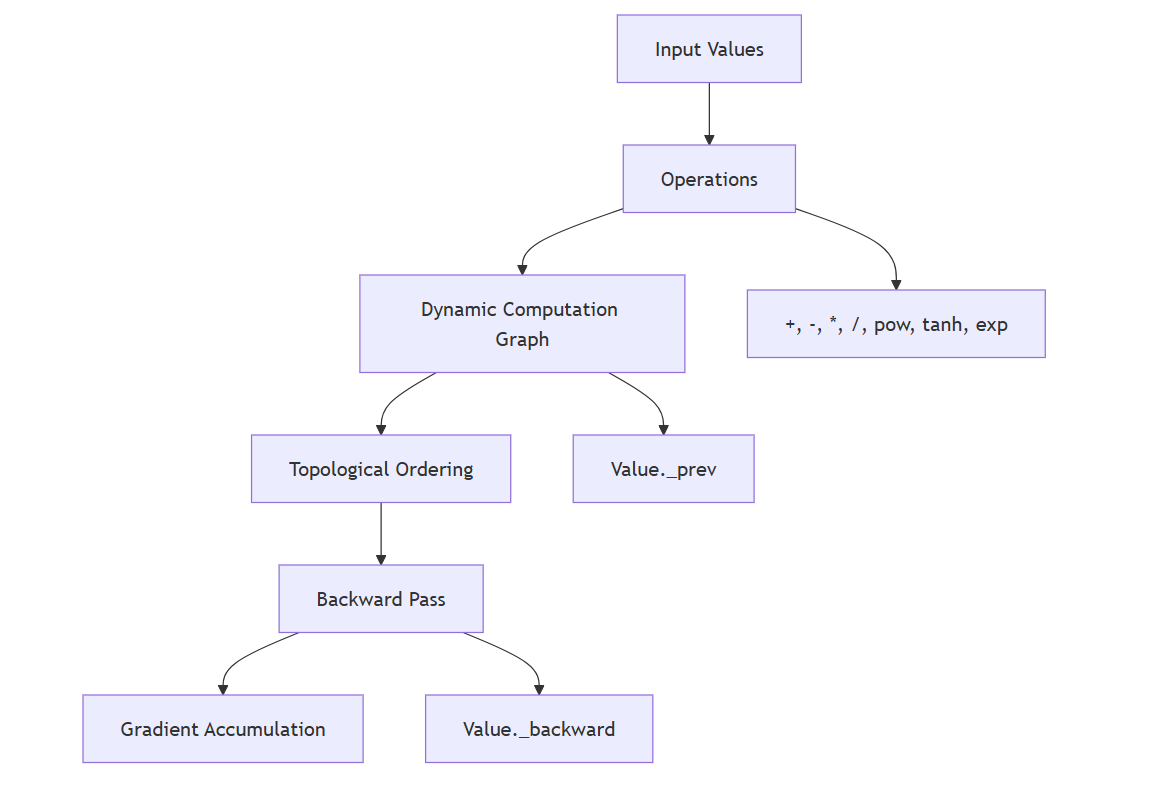

System Design

- • Dynamic computation graph (define-by-run)

- • Reverse-mode autodiff using topological traversal

- • Gradient accumulation across dependency paths

Architecture

The system builds a dynamic computation graph during forward execution and performs reverse-mode differentiation using topological traversal.

Results & Insights

- • Gradient correctness validated (~1e-10 error)

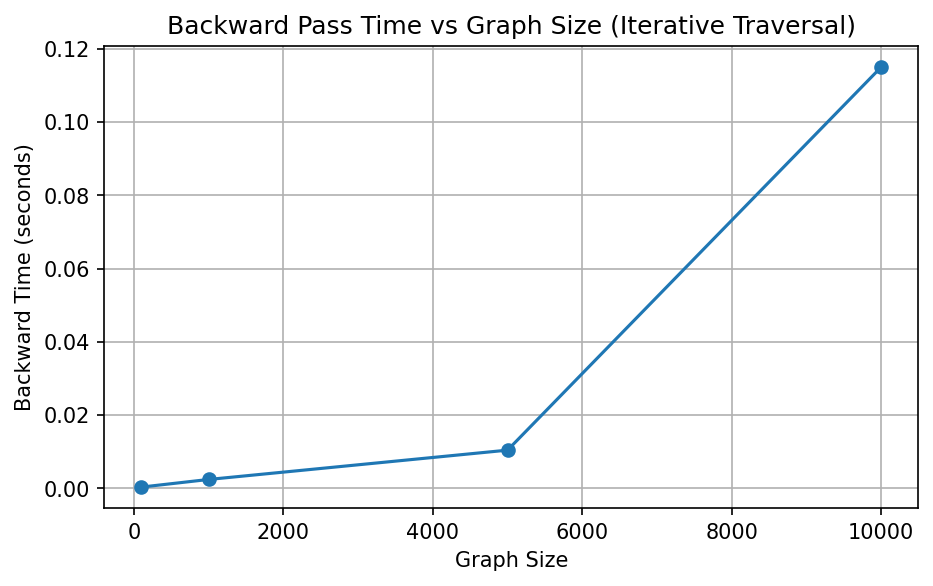

- • Backward pass scales approximately linearly with graph size

- • Recursive traversal introduced depth limits (~1k nodes)

- • Replaced recursion with iterative DFS, scaling to 10,000+ nodes

- • Observed trade-offs between flexibility, memory usage, and execution efficiency

Benchmark

Backward pass performance increases with graph size, demonstrating near-linear scaling behavior.

Takeaway: Autograd performance depends not only on mathematical correctness, but also on graph traversal strategy, memory behavior, and execution overhead.

Technical Stack

Python · NumPy · Autograd · Backpropagation · Performance Analysis