Project Case Study

Benchmark Guardian

Updated: May 2026

Built a developer infrastructure platform for automated benchmark regression detection, multi-metric performance analysis, and GitHub pull request feedback.

- GitHub App + webhook integration
- Multi-metric regression detection engine
- Automated PR feedback workflows

Problem

Performance regressions in ML infrastructure and backend systems are often difficult to detect during code review. Latency, memory usage, throughput, and scaling efficiency can silently degrade without failing tests.

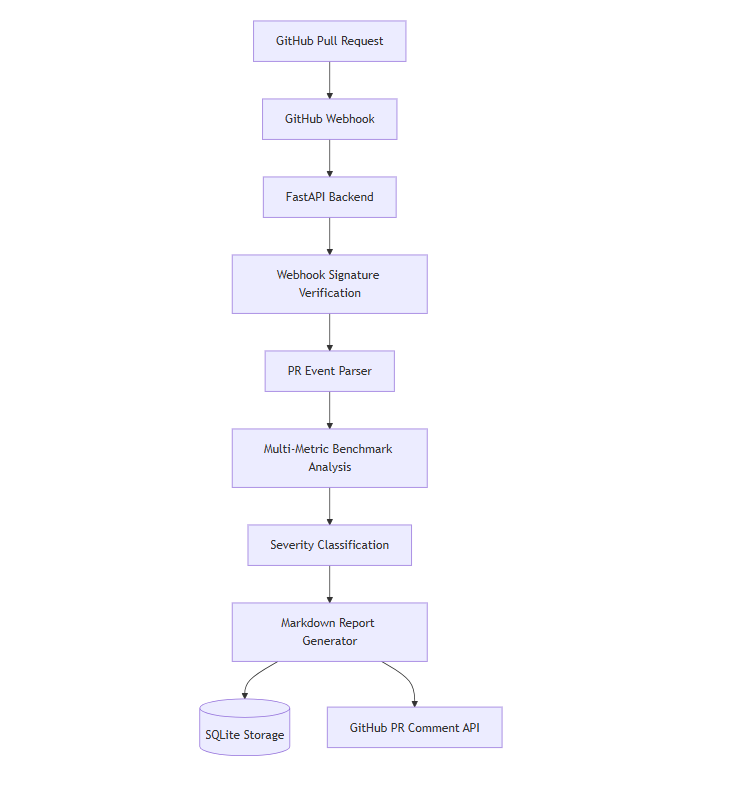

System Design

- GitHub App + webhook-driven architecture
- Secure webhook signature verification
- Multi-metric benchmark comparison engine
- Severity classification and regression detection
- Automated PR comment generation
- SQLite persistence layer for benchmark storage
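As an illustration of the persistence layer, here is a minimal sketch using Python's built-in `sqlite3` module. The table name `benchmark_results`, its columns, and the helper functions are hypothetical; the project's actual schema is not described here.

```python
import sqlite3

# Hypothetical schema: one row per (commit, metric) measurement.
SCHEMA = """
CREATE TABLE IF NOT EXISTS benchmark_results (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    commit_sha TEXT NOT NULL,
    metric TEXT NOT NULL,
    value REAL NOT NULL,
    recorded_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
)
"""


def open_store(path=":memory:"):
    """Open (or create) the benchmark database and ensure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def save_results(conn, commit_sha, metrics):
    """Store a {metric_name: value} mapping for one commit in a single transaction."""
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT INTO benchmark_results (commit_sha, metric, value) VALUES (?, ?, ?)",
            [(commit_sha, name, float(value)) for name, value in metrics.items()],
        )


def load_results(conn, commit_sha):
    """Return the stored metrics for a commit; later rows win if a metric repeats."""
    rows = conn.execute(
        "SELECT metric, value FROM benchmark_results WHERE commit_sha = ? ORDER BY id",
        (commit_sha,),
    ).fetchall()
    return dict(rows)
```

Keying rows by commit SHA lets a pull request's head commit be compared against its base commit with two `load_results` calls.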

Architecture

Event-driven backend architecture that processes GitHub pull request events, analyzes benchmark regressions, and publishes automated developer feedback.
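The entry point of such a pipeline can be sketched with the standard library alone. GitHub signs each webhook delivery with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header, and names the event type in `X-GitHub-Event`. The function names and the set of handled actions below are assumptions for illustration, not the project's actual code.

```python
import hashlib
import hmac
import json
from typing import Optional

# Assumption: only these pull_request actions trigger a benchmark analysis.
HANDLED_ACTIONS = {"opened", "synchronize", "reopened"}


def verify_signature(secret: bytes, body: bytes, signature_header: Optional[str]) -> bool:
    """Check the X-Hub-Signature-256 header against the raw request body."""
    if not signature_header or not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), signature_header.encode())


def should_analyze(event_name: str, body: bytes) -> bool:
    """Decide whether a verified delivery should start a benchmark analysis."""
    if event_name != "pull_request":
        return False
    payload = json.loads(body)
    return payload.get("action") in HANDLED_ACTIONS
```

The signature must be computed over the raw bytes of the request body, before any JSON parsing, because re-serialized JSON may not match what GitHub signed.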

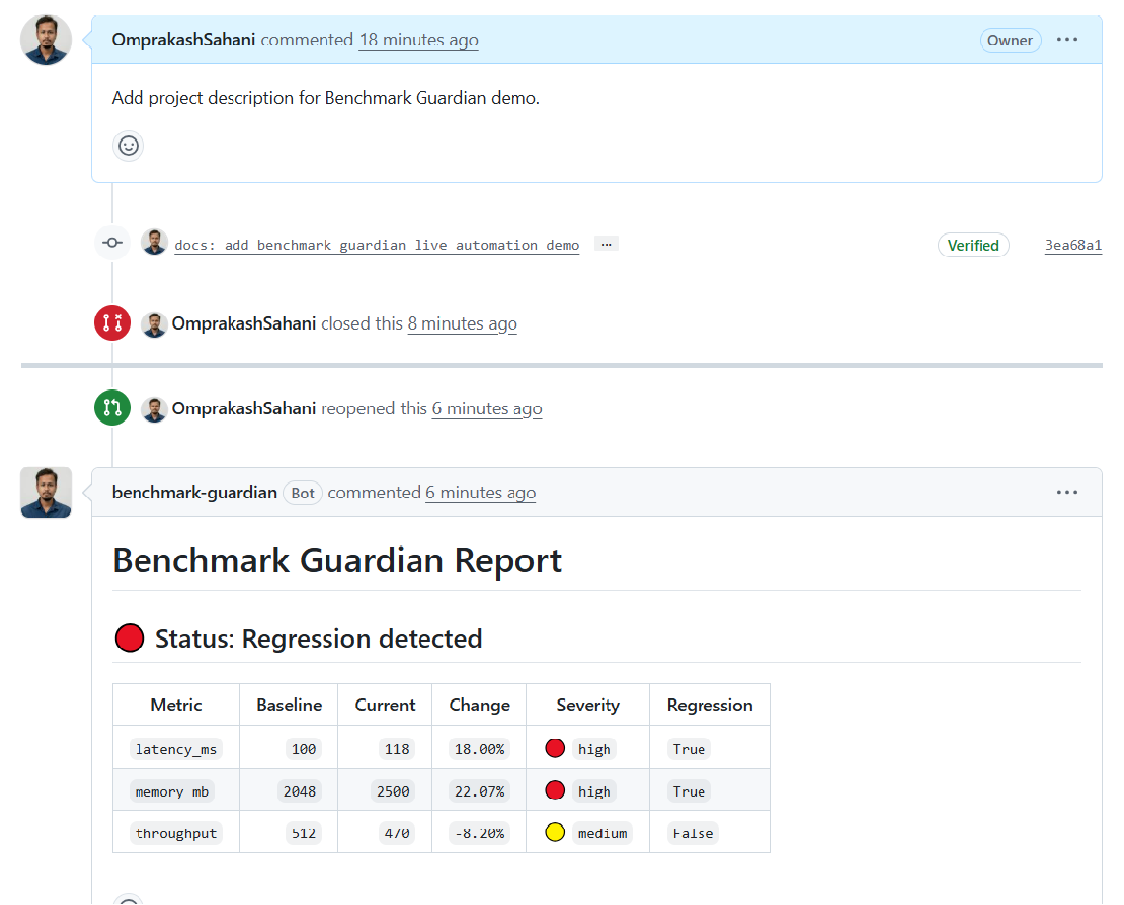

Live GitHub Integration

Benchmark Guardian automatically analyzes pull request benchmarks and posts regression reports directly as pull request comments.
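A rough sketch of the reporting step follows. Pull request comments are created through GitHub's REST endpoint `POST /repos/{owner}/{repo}/issues/{issue_number}/comments`; the helper names, the Markdown table layout, and the `findings` tuple shape are hypothetical. The request is only constructed here, not sent.

```python
import json
import urllib.request


def render_comment(findings):
    """Render (metric, change_pct, severity) tuples as a Markdown table."""
    lines = [
        "### Benchmark Guardian report",
        "",
        "| Metric | Change | Severity |",
        "| --- | --- | --- |",
    ]
    for metric, change_pct, severity in findings:
        lines.append(f"| `{metric}` | {change_pct:+.0f}% | {severity} |")
    return "\n".join(lines)


def build_comment_request(owner, repo, pr_number, token, body):
    """Build (without sending) the HTTP request that posts a PR comment."""
    url = f"https://api.github.com/repos/{owner}/{repo}/issues/{pr_number}/comments"
    return urllib.request.Request(
        url,
        data=json.dumps({"body": body}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # e.g. a GitHub App installation token
            "Accept": "application/vnd.github+json",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would perform the call; keeping construction separate makes the formatting logic easy to test without network access.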

Results & Insights

- Automated detection of latency, memory, and throughput regressions
- Enabled benchmark feedback directly within pull request workflows
- Classified regression severity across multiple performance dimensions
- Demonstrated infrastructure-oriented performance observability workflows

Example Benchmark Report

- latency_ms: +18% → HIGH regression
- memory_mb: +22% → HIGH regression
- throughput: -8% → MEDIUM regression
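One way a report like this could be produced is sketched below. The thresholds (5% for MEDIUM, 15% for HIGH) and the `HIGHER_IS_BETTER` set are assumptions chosen only to be consistent with the example figures above; the project's actual rules may differ.

```python
# Assumption: metrics where a decrease, not an increase, is the regression.
HIGHER_IS_BETTER = {"throughput"}

# Assumed thresholds, in percent of the baseline value.
MEDIUM_PCT = 5.0
HIGH_PCT = 15.0


def percent_change(baseline, candidate):
    """Signed change of candidate relative to baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (candidate - baseline) * 100.0 / baseline


def classify(metric, baseline, candidate):
    """Return (signed percent change, severity label) for one metric."""
    change = percent_change(baseline, candidate)
    # Normalize direction so a positive value always means "got worse".
    degradation = -change if metric in HIGHER_IS_BETTER else change
    if degradation >= HIGH_PCT:
        severity = "HIGH"
    elif degradation >= MEDIUM_PCT:
        severity = "MEDIUM"
    else:
        severity = "NONE"
    return change, severity


print(classify("latency_ms", 100.0, 118.0))   # (18.0, 'HIGH')
print(classify("throughput", 1000.0, 920.0))  # (-8.0, 'MEDIUM')
```

Normalizing the direction per metric keeps a single threshold table usable for both lower-is-better metrics (latency, memory) and higher-is-better metrics (throughput).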

Takeaway: Performance regressions in ML infrastructure require automated, systems-aware analysis integrated directly into developer workflows.

Technical Stack

Python · FastAPI · GitHub Apps · Webhooks · SQLite · Performance Analysis