Project Case Study

ML Reproducibility Auditor

Updated: May 2026

Built a CLI tool that evaluates machine learning repositories for reproducibility, engineering quality, and ML systems design patterns by analyzing them through the GitHub API.

- Reproducibility scoring system

- GitHub API-based analysis

- ML systems pattern detection

Problem

Many ML repositories lack reproducibility, clear environment setup, and engineering discipline. Evaluating their reliability and system design quality is difficult without manual inspection.

System Design

- GitHub API-based repository inspection without cloning

- Structure analysis for CI/CD, benchmarks, datasets, and packaging

- Code quality and determinism checks

- ML system pattern detection for PyTorch, distributed training, and all-reduce

- Scoring engine with reproducibility score and risk classification
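As a rough illustration of the structure analysis and pattern detection listed above, the sketch below checks a list of repository paths and a few source files for reproducibility signals. It is a minimal sketch, not the tool's actual implementation: the signal names, file-name conventions, regular expressions, and the `detect_signals` function are all assumptions made for this example. The path list could plausibly come from the GitHub REST API's Git trees endpoint, but the code works on plain Python values so it runs offline.

```python
import re
from pathlib import PurePosixPath

# Hypothetical structural signals: name -> predicate over a repo-relative path.
STRUCTURE_SIGNALS = {
    "ci_cd": lambda p: p.startswith(".github/workflows/")
    or p in {".gitlab-ci.yml", ".travis.yml"},
    "packaging": lambda p: PurePosixPath(p).name
    in {"pyproject.toml", "setup.py", "setup.cfg"},
    "benchmarks": lambda p: any(
        part in {"benchmark", "benchmarks"} for part in PurePosixPath(p).parts
    ),
    "datasets": lambda p: any(
        part in {"data", "dataset", "datasets"} for part in PurePosixPath(p).parts
    ),
}

# Hypothetical code-level patterns, matched against raw source text.
CODE_PATTERNS = {
    "seed_control": re.compile(
        r"\b(torch\.manual_seed|np\.random\.seed|random\.seed)\s*\("
    ),
    "pytorch": re.compile(r"^\s*(?:import|from)\s+torch\b", re.MULTILINE),
    "distributed_training": re.compile(
        r"\btorch\.distributed\b|\bDistributedDataParallel\b"
    ),
    "all_reduce": re.compile(r"\ball_reduce\s*\("),
}


def detect_signals(paths, sources):
    """Return {signal_name: bool} for a repository.

    paths   -- iterable of repo-relative file paths (POSIX style)
    sources -- mapping of file path -> source text for files worth scanning
    """
    paths = list(paths)
    found = {
        name: any(matches(p) for p in paths)
        for name, matches in STRUCTURE_SIGNALS.items()
    }
    for name, pattern in CODE_PATTERNS.items():
        found[name] = any(pattern.search(text) for text in sources.values())
    return found
```

Text matching of this kind is only a heuristic: a seed call inside a comment would still count as seed control, so a stricter check would parse the source instead.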

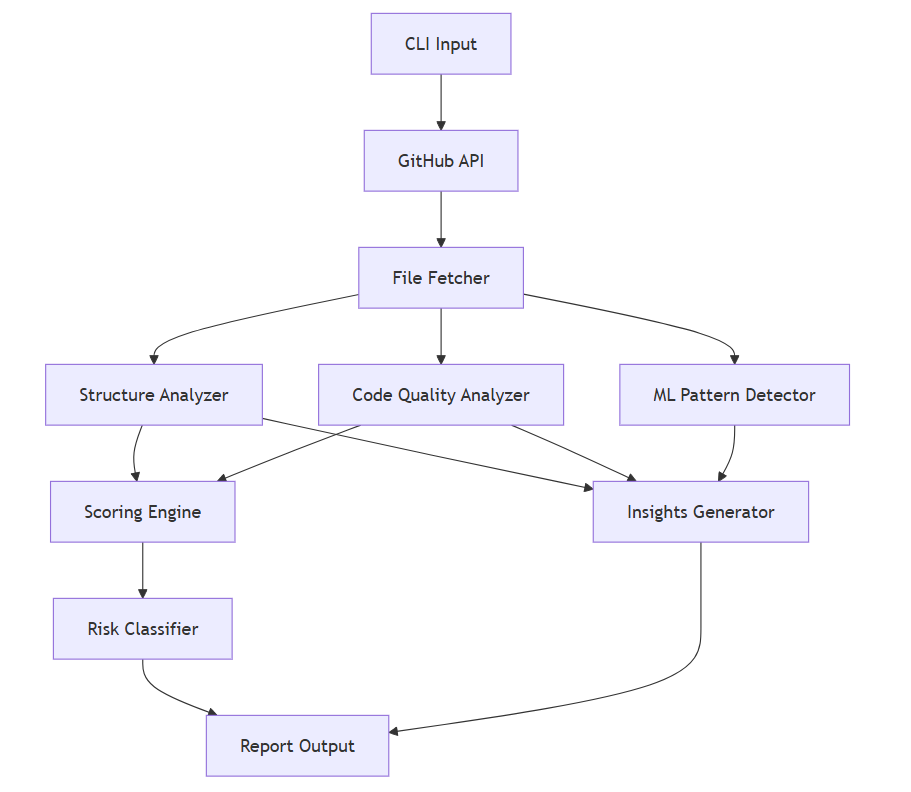

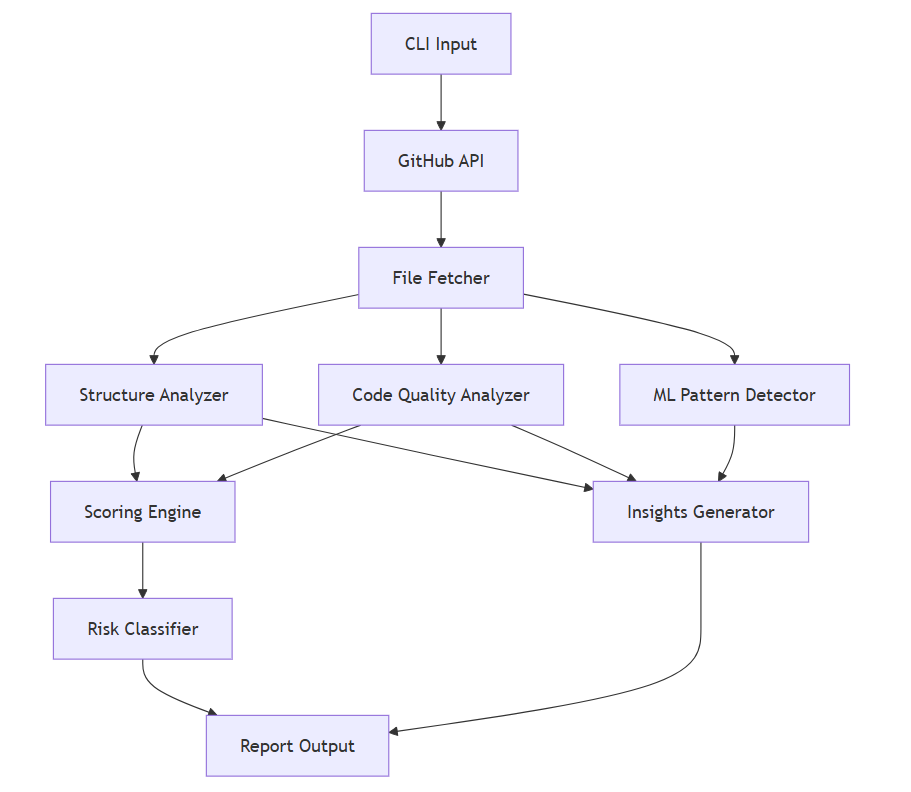

Architecture

The tool fetches repository metadata through the GitHub API, analyzes structure and code signals, scores reproducibility, classifies risk, and generates actionable insights.
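The scoring and risk classification stages of this pipeline can be sketched as a penalty model over detected signals. This is a minimal sketch under stated assumptions: the penalty values, the risk thresholds, and the names `PENALTIES`, `Assessment`, and `assess` are invented for illustration and are not the tool's actual weights or API.

```python
from dataclasses import dataclass

# Hypothetical penalties (points out of 10) applied when a signal is missing.
PENALTIES = {
    "ci_cd": 1.5,
    "seed_control": 1.0,
    "environment": 2.0,
    "packaging": 1.0,
    "benchmarks": 0.5,
}


@dataclass(frozen=True)
class Assessment:
    score: float    # 0.0 to 10.0, higher means more reproducible
    risk: str       # "LOW", "MEDIUM", or "HIGH"
    missing: tuple  # names of the signals that were not found


def assess(signals):
    """Score a repository from a {signal_name: bool} mapping.

    Signals absent from the mapping are treated as missing.
    """
    missing = tuple(name for name in PENALTIES if not signals.get(name, False))
    score = max(0.0, 10.0 - sum(PENALTIES[name] for name in missing))
    # Hypothetical thresholds for the risk classification.
    if score >= 8.0:
        risk = "LOW"
    elif score >= 5.0:
        risk = "MEDIUM"
    else:
        risk = "HIGH"
    return Assessment(score=round(score, 1), risk=risk, missing=missing)
```

Keeping the weights in a single table makes the score easy to explain: each lost point traces back to one named missing signal, which is also what the generated insights report.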

Results & Insights

- Identified missing reproducibility signals such as CI/CD and seed control

- Detected system-level patterns across real-world ML repositories

- Provided automated evaluation of engineering maturity

- Enabled comparison of ML infrastructure practices across projects

Example Output

Reproducibility Score: 7.5/10

Risk Level: MEDIUM

Missing CI/CD → Not automatically validated

Missing seed control → Not reproducible
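A report in this shape could be produced by a small formatter like the one below. It is a hedged sketch: `render_report` and the `CONSEQUENCES` table are hypothetical names, and only the two consequence messages shown in the example output are taken from the tool; the fallback message is an assumption.

```python
# Consequence text per missing signal; the two entries mirror the example output.
CONSEQUENCES = {
    "CI/CD": "Not automatically validated",
    "seed control": "Not reproducible",
}


def render_report(score, risk, missing):
    """Format a score, a risk level, and missing signals as a plain-text report."""
    lines = [
        f"Reproducibility Score: {score:g}/10",
        f"Risk Level: {risk}",
    ]
    for signal in missing:
        # Hypothetical fallback for signals without a known consequence.
        consequence = CONSEQUENCES.get(signal, "Impact not classified")
        lines.append(f"Missing {signal} → {consequence}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_report(7.5, "MEDIUM", ["CI/CD", "seed control"]))
```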

Takeaway: Reproducibility in ML systems depends on engineering practices, not just model design.

Technical Stack

Python · CLI · GitHub API · Static Analysis · ML Systems